Artificial intelligence (AI) is driving critical decisions, from customer loan approvals to clinical diagnoses and predictive resource planning, but its black-box nature can erode trust when decisions can’t be explained. Explainable AI (XAI) addresses this by revealing how and why AI models arrive at specific outcomes, enhancing accountability, transparency, and stakeholder confidence.

As businesses deploy increasingly complex AI systems in 2026, explainability isn’t optional; it’s a foundational part of responsible AI frameworks, AI governance, and ethical AI development. Enterprises that prioritise explainability gain not just compliance advantages but also trust, resilience, and a competitive edge.

What’s Inside

I. Why Explainable AI Matters for Enterprises in 2026

II. Explainable AI in Action: Mortgage Decisioning in Enterprise Finance

III. Beyond Individual Cases: 5 Powerful Explainable AI Use Cases

IV. Core Explainable AI Techniques & How They Work

V. Explainable AI in Enterprise: Governance, Trust & Compliance

VI. Explainable AI Implementation Best Practices

VII. Implementation Challenges & Solutions

VIII. The Future of Explainable AI (XAI) in 2026 and Beyond

Key Takeaways

- Growing regulatory and ethical demand for transparency

- Explainable AI techniques like SHAP and LIME

- Real-world explainability in high-stakes decisioning

- XAI supporting Responsible AI frameworks

- Explainable AI for enterprise governance and LLM applications

I. Why Explainable AI Matters for Enterprises in 2026

Explainable AI has become a board-level priority in 2026 as enterprises scale AI across finance, healthcare, operations, and customer experience.

With the rise of large language models and autonomous systems, AI decisions are becoming harder to interpret. IBM identifies lack of explainability as a major barrier to enterprise adoption, driven by trust gaps, regulatory risk, and accountability requirements. At the same time, regulations such as the EU AI Act are introducing binding transparency obligations, particularly for high-risk AI systems.

This is where Explainable AI in enterprise environments plays a critical role.

Explainable AI helps organisations build trust, demonstrate compliance, reduce bias, and strengthen Responsible AI governance, turning opaque models into accountable business systems.

To see what this looks like in practice, let’s start with one of the most established Explainable AI use cases: mortgage decisioning in financial services.

II. Explainable AI in Action: Mortgage Decisioning in Enterprise Finance

One of the most mature Explainable AI use cases today is in enterprise lending and credit decisioning.

Financial institutions increasingly rely on machine learning models to assess mortgage applications, credit risk, and affordability. While these models deliver high predictive accuracy, they often operate as black boxes, creating challenges around transparency, regulatory compliance, and customer trust.

According to IBM, lack of explainability remains a major barrier to enterprise AI adoption in financial services, particularly as regulations demand clear justification for automated decisions.

The Challenge: Opaque Loan Decisions

In traditional AI-driven lending, applicants may receive approvals or rejections without any visibility into contributing factors. This creates:

- Customer dissatisfaction

- Regulatory exposure

- Limited internal auditability

- Increased dispute resolution costs

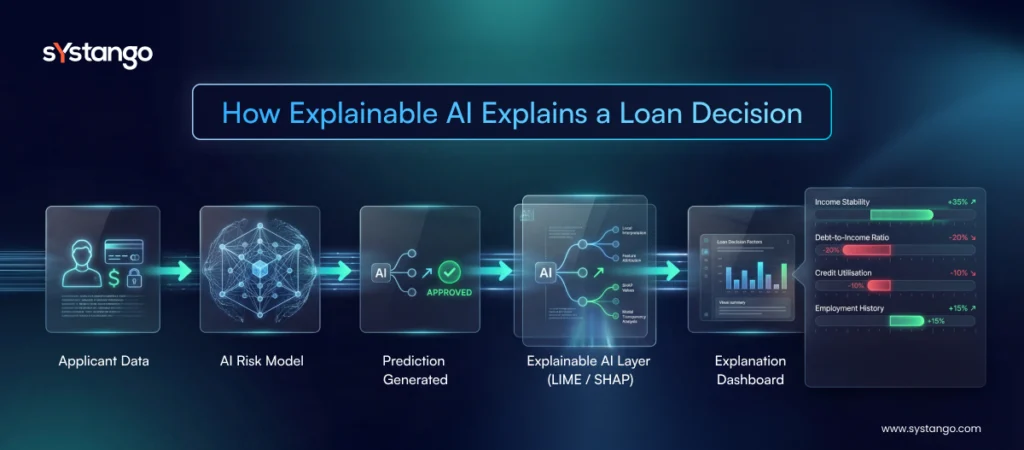

How Explainable AI Solves This

Using Explainable AI techniques such as LIME (Local Interpretable Model-agnostic Explanations), lenders can identify which variables most influenced each decision, such as income stability, debt-to-income ratio, employment history, or credit utilisation.

LIME works by generating local explanations around individual predictions, effectively answering:

Which inputs pushed this application toward approval or rejection?

Business Impact

In practice, this enables:

- Clear explanations for applicants

- Actionable improvement recommendations (for example, reducing credit utilisation)

- Faster regulatory reporting

- Improved internal governance

Most importantly, Explainable AI transforms opaque lending models into transparent decision systems, strengthening trust, reducing disputes, and supporting responsible AI adoption across financial operations.
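As a rough illustration of the "clear explanations for applicants" step, the sketch below turns per-feature contributions into plain-language reasons. It assumes the contributions have already been produced by an explainer such as LIME or SHAP. The function name `reason_codes`, the feature names, the numbers, and the message texts are all hypothetical and are not taken from any real lending system.

```python
def reason_codes(contributions, messages, top_n=2):
    """Return applicant-facing text for the features that pushed hardest toward rejection.

    contributions: feature name -> signed contribution (negative = toward rejection).
    messages: feature name -> plain-language explanation (hypothetical wording).
    """
    negatives = sorted(
        (item for item in contributions.items() if item[1] < 0),
        key=lambda item: item[1],  # most negative first
    )
    return [messages.get(name, name) for name, _ in negatives[:top_n]]


# Hypothetical explainer output for one rejected application
contributions = {
    "credit_utilisation": -0.31,
    "debt_to_income": -0.18,
    "income_stability": 0.22,
    "employment_history": 0.05,
}
messages = {
    "credit_utilisation": "Credit utilisation is high; reducing card balances may help.",
    "debt_to_income": "Debt is high relative to income.",
}

reasons = reason_codes(contributions, messages)
for line in reasons:
    print("-", line)
```

Keeping the message texts separate from the model lets compliance teams review the wording without touching the scoring logic.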

III. Beyond Individual Cases: 5 Powerful Explainable AI Use Cases

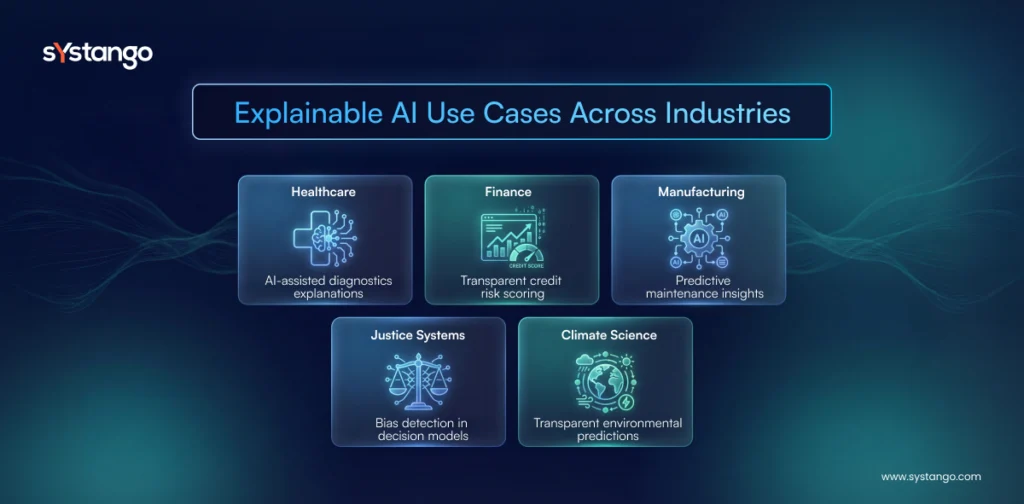

1. Healthcare: Transparent Diagnostics

Explainable AI can clarify why an AI model flagged a particular diagnosis, improving clinician trust and enabling safer, auditable decisions in patient care. Clinical research shows that XAI methods provide interpretable insights into imaging and pattern recognition tasks, crucial in safety-critical environments like radiology.

2. Finance: Fair Lending & Risk Transparency

In financial decision-making, transparent AI supports fairness and compliance. According to the CFA Institute Research and Policy Center, Explainable AI systems help compliance teams trace how credit scores, income levels, and other variables influenced loan decisions, which is a must for institutional trust and regulatory review.

3. Criminal Justice: Bias Detection & Resource Allocation

AI systems predicting risk or sentencing outcomes must avoid entrenched biases. XAI reveals which factors drive predictions, allowing risk officers to identify and correct unfair patterns. This leads to more equitable decisioning and better alignment with ethical standards.

4. Manufacturing: Predictive Maintenance with Insight

Explainable predictive models can tell operations teams why a model predicts imminent equipment failure, linking specific sensor anomalies to machine health. This clarity improves planning and reduces downtime risk.

5. Climate & Environmental AI

Environmental scientists use XAI to understand complex climate predictions, for example, which variables most influence extreme weather forecasts, enabling clearer mitigation strategies and trusted sustainability insights.

IV. Core Explainable AI Techniques & How They Work

SHAP (SHapley Additive exPlanations)

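SHAP uses Shapley values from cooperative game theory to compute how much each feature contributes to a prediction. It’s widely used because its attributions are consistent and additive: the contributions sum to the difference between the prediction and a baseline, so feature impact can be compared across predictions and models.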
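To make the game-theory idea concrete, here is a minimal sketch that computes exact Shapley values by enumerating every feature coalition. It is not the SHAP library, which uses faster approximations. The scoring function `toy_score`, the feature names, and the baseline values are hypothetical, and "absent" features are simply replaced by their baseline value, which is one common simplifying assumption.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values for one prediction.

    Features outside a coalition take their baseline value. The cost grows
    as 2^n, so this is only practical for a handful of features.
    """
    names = list(instance)
    n = len(names)

    def value(coalition):
        mixed = {f: (instance[f] if f in coalition else baseline[f]) for f in names}
        return predict(mixed)

    phi = {}
    for f in names:
        others = [g for g in names if g != f]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi


def toy_score(x):
    """Hypothetical credit score with one interaction term."""
    return 0.5 * x["income"] - 0.8 * x["debt_ratio"] + 0.2 * x["income"] * x["years_employed"]


applicant = {"income": 4.0, "debt_ratio": 3.0, "years_employed": 2.0}
average = {"income": 3.0, "debt_ratio": 2.0, "years_employed": 1.0}

phi = shapley_values(toy_score, applicant, average)
for name, contribution in phi.items():
    print(f"{name}: {contribution:+.3f}")
```

The contributions add up to the gap between the applicant's score and the baseline score, which is the additive property that makes these attributions easy to audit.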

LIME (Local Interpretable Model-agnostic Explanations)

LIME builds interpretable surrogate models around individual predictions, explaining why a specific decision was made, regardless of the original model’s complexity.
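The surrogate idea can be sketched in a few dozen lines: perturb the input, weight each perturbed sample by how close it stays to the original, and fit a weighted linear model. This is a simplified illustration in the spirit of LIME, not the `lime` package itself. The black-box function `approval_probability`, the noise scale, and the kernel width are assumptions chosen for the example.

```python
import math
import random


def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def local_surrogate(predict, instance, scale=0.5, kernel_width=1.0, n_samples=500, seed=0):
    """Fit a proximity-weighted linear model around `instance`.

    Returns (intercept, coefficients); each coefficient approximates the
    local effect of one feature on the black-box output.
    """
    rng = random.Random(seed)
    d = len(instance)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        sample = [v + rng.gauss(0.0, scale) for v in instance]
        dist2 = sum((s - v) ** 2 for s, v in zip(sample, instance))
        rows.append([1.0] + sample)  # leading 1.0 is the intercept column
        targets.append(predict(sample))
        weights.append(math.exp(-dist2 / kernel_width ** 2))
    # Weighted normal equations: (X^T W X) beta = X^T W y
    xtwx = [[sum(w * r[i] * r[j] for r, w in zip(rows, weights)) for j in range(d + 1)]
            for i in range(d + 1)]
    xtwy = [sum(w * r[i] * y for r, w, y in zip(rows, weights, targets))
            for i in range(d + 1)]
    beta = solve_linear_system(xtwx, xtwy)
    return beta[0], beta[1:]


def approval_probability(x):
    """Hypothetical non-linear black box: x = [income, debt_ratio]."""
    income, debt_ratio = x
    return 1.0 / (1.0 + math.exp(-(1.2 * income - 2.0 * debt_ratio ** 2)))


intercept, coefs = local_surrogate(approval_probability, [2.0, 1.0])
print(f"income: {coefs[0]:+.3f}, debt_ratio: {coefs[1]:+.3f}")
```

The signs of the coefficients show which inputs pushed this particular prediction up or down. They describe the model only near this one applicant, not its global behaviour.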

Counterfactual Explanations

These explainability outputs describe how input changes would alter outcomes, for instance, “If your income were 10% higher, this loan would be approved.”
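A counterfactual like this can be found with a simple search. The sketch below raises income in whole-percent steps until a rejection flips to an approval. The `approve` rule (a 36% debt-to-income cut-off) and the applicant figures are hypothetical stand-ins for a trained model and real data. Production tools typically search over several features at once and penalise implausible changes.

```python
def approve(applicant):
    """Hypothetical rule standing in for a trained model:
    approve when annual debt is at most 36% of income."""
    debt_to_income = applicant["annual_debt"] / applicant["income"]
    return debt_to_income <= 0.36


def income_counterfactual(model, applicant, max_pct=50):
    """Smallest whole-percent income rise that flips a rejection to approval.

    Returns 0 if the applicant is already approved, or None if no rise
    up to `max_pct` percent is enough.
    """
    if model(applicant):
        return 0
    for pct in range(1, max_pct + 1):
        candidate = dict(applicant, income=applicant["income"] * (100 + pct) / 100)
        if model(candidate):
            return pct
    return None


applicant = {"income": 50_000, "annual_debt": 19_680}
pct = income_counterfactual(approve, applicant)
if pct:
    print(f"If your income were {pct}% higher, this loan would be approved.")
```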

Interpretability vs Explainability

Interpretability refers to models whose logic is intrinsically visible (e.g., decision trees). Explainability is broader: it also covers post-hoc tools that interpret complex models like deep neural networks.

V. Explainable AI in Enterprise: Governance, Trust & Compliance

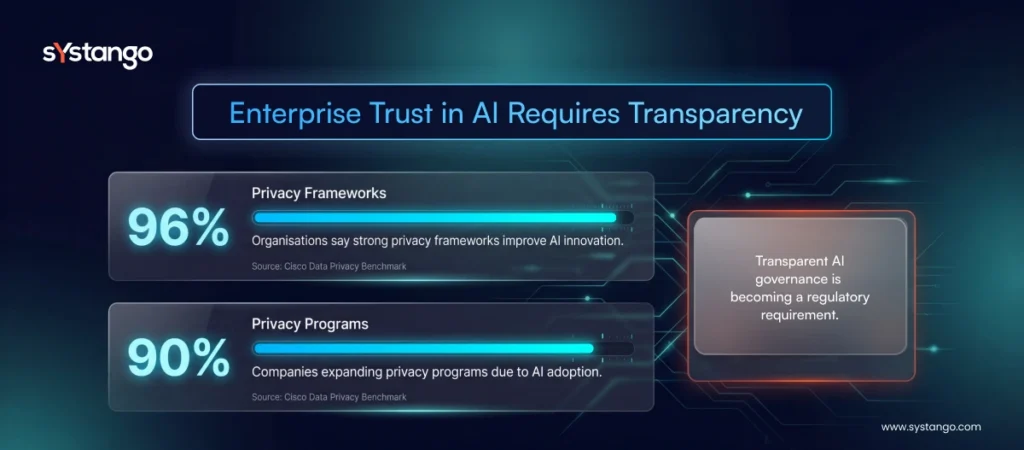

Explainable AI is core to transparent AI decision-making, especially under evolving regulatory environments like the EU AI Act, GDPR, and emerging global frameworks that require verifiable explanations for automated decisions.

According to Cisco’s 2026 Data and Privacy Benchmark Study, nearly 90% of organisations reported expanding privacy programs due to AI adoption, and 96% say strong privacy frameworks help unlock AI agility and innovation, both of which support trust in AI-driven services.

For enterprises, XAI and AI governance in 2026 are not just technical but strategic, affecting risk, customer trust, brand reputation, and regulatory compliance.

While Responsible AI defines ethical principles, Explainable AI provides the operational layer that makes Responsible AI measurable and actionable in production systems.

VI. Explainable AI Implementation Best Practices

- Select Suitable Techniques: Use SHAP, LIME, and counterfactuals where most effective.

- Human-in-the-Loop: Combine automated explanations with domain expertise.

- Governance Integration: Align explainability tools with ethical AI frameworks and compliance policies.

- Metrics: Track clarity, fairness, and actionability of explanations.

- Tooling: Embed explanations into dashboards used by auditors and business users.
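The metrics item above can be made measurable in several ways. The sketch below shows two illustrative proxies, both of which are assumptions rather than established standards: a clarity proxy (how many features are needed to cover most of the attribution) and an actionability proxy (how much of the attribution falls on features a person can change). The function names, feature names, and the 80% threshold are hypothetical.

```python
def features_for_coverage(contributions, coverage=0.8):
    """Clarity proxy: number of features needed to cover `coverage`
    of the total absolute attribution. Fewer is easier to communicate."""
    magnitudes = sorted((abs(v) for v in contributions.values()), reverse=True)
    total = sum(magnitudes)
    if total == 0:
        return 0
    running = 0.0
    for count, magnitude in enumerate(magnitudes, start=1):
        running += magnitude
        if running >= coverage * total:
            return count
    return len(magnitudes)


def actionable_share(contributions, actionable):
    """Actionability proxy: share of absolute attribution that falls on
    features the affected person can realistically change."""
    total = sum(abs(v) for v in contributions.values())
    if total == 0:
        return 0.0
    return sum(abs(v) for name, v in contributions.items() if name in actionable) / total


# Hypothetical explanation for one decision
explanation = {
    "credit_utilisation": -0.40,
    "debt_to_income": -0.30,
    "income_stability": 0.20,
    "employment_history": 0.10,
}

clarity = features_for_coverage(explanation)
share = actionable_share(explanation, {"credit_utilisation", "debt_to_income"})
print(f"features needed for 80% coverage: {clarity}")
print(f"actionable share of attribution: {share:.0%}")
```

Tracked over time on a dashboard, numbers like these can flag when explanations become too diffuse or too dependent on factors nobody can act on.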

VII. Implementation Challenges & Solutions

1. Complex Models vs Clarity

Complex deep learning models can be hard to explain; hybrid strategies using interpretable layers plus post-hoc explanations can help.

2. Explainable AI for LLMs

Explainability for large language models remains challenging, but research is advancing approaches that link LLM outputs to traceable tokens and attention patterns.

In large language models, Explainable AI helps enterprises understand prompt influence, output attribution, and reasoning pathways, making XAI essential for production LLM deployments.
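One simple way to estimate prompt influence is leave-one-out ablation: remove each token in turn and measure how much a score for the output changes. The sketch below shows the idea with a toy scorer. `toy_refund_score` and its weights are hypothetical. In a real deployment the scorer would be a model call, for example the probability the model assigns to a target output, which this example does not attempt to reproduce. Note that this is a perturbation-based method and does not inspect attention patterns.

```python
def token_attributions(score, tokens):
    """Leave-one-out attribution: the drop in score when each token is removed."""
    full = score(tokens)
    return [
        (token, full - score(tokens[:i] + tokens[i + 1:]))
        for i, token in enumerate(tokens)
    ]


def toy_refund_score(tokens):
    """Toy stand-in for a model's confidence that a message is a refund request."""
    weights = {"refund": 0.6, "damaged": 0.3, "please": 0.05}
    return sum(weights.get(token.lower(), 0.0) for token in tokens)


prompt = "Please refund the damaged item".split()
attributions = token_attributions(toy_refund_score, prompt)
for token, influence in sorted(attributions, key=lambda pair: -pair[1]):
    print(f"{token}: {influence:+.2f}")
```

Ablation needs one extra scorer call per token, so for long prompts it is usually applied to phrases or sentences rather than single tokens.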

3. Accuracy vs Interpretability

Balancing performance and explainability often requires trade-offs; choosing the right technique based on use case is key.

VIII. The Future of Explainable AI (XAI) in 2026 and Beyond

Explainable AI isn’t static; it’s evolving:

- Personalised Explanations: Tailored reasoning for stakeholders, not generic outputs.

- Systems-Level XAI: Beyond individual models to explain entire AI ecosystems.

- Human-AI Collaboration: XAI will enable humans to guide AI in real time.

- Responsible AI Development: Ethical AI frameworks will embed explainability from design to delivery.

- Democratised XAI Tools: User-friendly explainability platforms for all levels of users.

In 2026, explainability is part of trustworthy AI, a concept encompassing transparency, fairness, accountability, and privacy, essential for widespread adoption.

Strategic Summary

This article explores Explainable AI in 2026: why it matters for modern enterprises, how it supports responsible and transparent AI governance, and what practical Explainable AI use cases look like in finance, healthcare, manufacturing, climate analytics, and beyond. You’ll learn about core Explainable AI techniques (SHAP, LIME, counterfactuals), see a concrete mortgage decisioning example, understand implementation challenges, and discover future possibilities for Explainable AI in enterprise systems. The guide also highlights how AI consulting services and AI development services help organisations adopt XAI with trust, compliance, and ethical rigour.

Conclusion

Explainable AI has moved from academic interest to an enterprise imperative. In 2026, transparent AI decision-making is no longer a “nice-to-have”; it’s core to compliance, trust, and operational excellence.

By embracing Explainable AI frameworks, techniques like SHAP and LIME, and responsible governance practices, organisations can unlock the full value of AI while managing risk, enhancing trust, and improving outcomes across industries.

At Systango, we help enterprises implement XAI with robust AI consulting services and AI development services that align governance, explainability, and business value, ensuring AI systems are not only powerful but also understandable, trustworthy, and responsible across modern AI software development initiatives.

Executive Summary

Explainable AI (XAI) is becoming essential for enterprises in 2026 as organisations increasingly rely on AI to make high-impact decisions in finance, healthcare, operations, and customer experience. Traditional “black-box” AI models often lack transparency, creating trust, compliance, and governance challenges. XAI addresses this by revealing how and why AI systems reach specific outcomes, enabling accountability and regulatory alignment. Through techniques such as SHAP, LIME, and counterfactual explanations, businesses can interpret complex models and provide clear reasoning behind automated decisions. Real-world applications, from mortgage lending and clinical diagnostics to predictive maintenance, demonstrate how explainability improves trust, fairness, and operational insight. As global regulations tighten, integrating XAI into Responsible AI frameworks will be critical for building transparent, compliant, and trustworthy enterprise AI systems.