Enterprises are scaling generative AI faster than their governance models can handle.

What began as experimentation is now embedded across legal operations, ESG reporting, customer support, and multi-cloud infrastructure. Yet most organisations are deploying AI on security architectures never designed for autonomous models.

This is no longer a technical gap — it is a board-level risk.

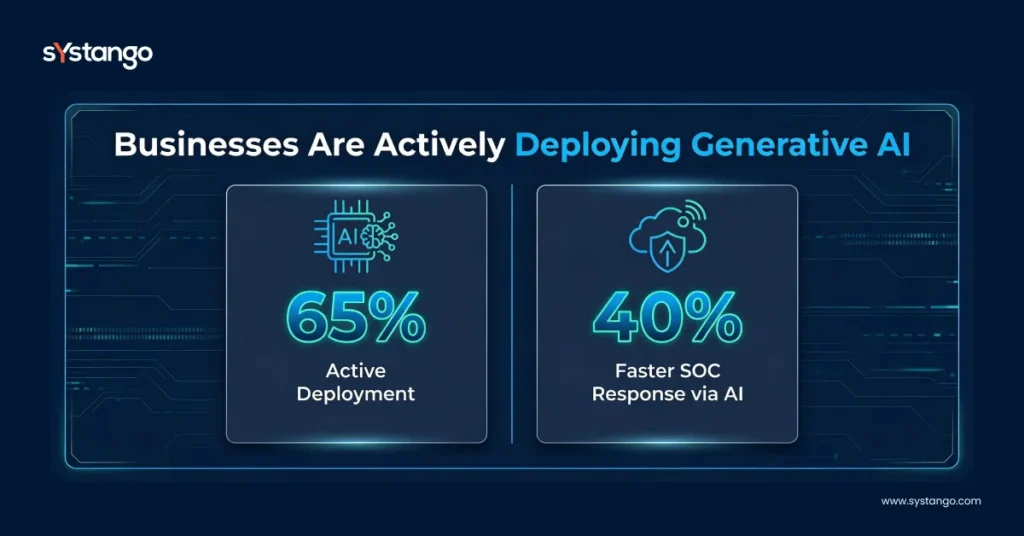

According to McKinsey, over 65% of organisations are deploying generative AI in at least one function.

AI is accelerating value creation.

But it is also expanding the enterprise attack surface.

In 2026, the real competitive advantage is not adopting AI first — it is securing it properly.

What’s Inside

I. Why AI Security Is Now a Strategic Priority

II. The Real Threat: LLM Security Risks in 2026

IV. The Solution: AI-Native Cybersecurity Architecture

VI. Business Impact: ROI of AI Security Investment

VII. Implementation Framework for CIOs & CTOs

Key Takeaways

- AI-powered cloud security solutions are essential for scaling GenAI safely.

- Enterprises face growing LLM security risks, including prompt injection, data leakage, and shadow AI.

- A structured AI governance framework 2026 is mandatory for compliance and board oversight.

- Zero Trust architecture with AI must extend to AI workloads.

- AI improves cybersecurity — but only with AI-native architecture and lifecycle security controls.

I. Why AI Security Is Now a Strategic Priority

Generative AI is embedded across:

- Legal automation

- Customer support copilots

- ESG reporting systems

- Autonomous AI agents

- Multi-cloud enterprise platforms

Security is no longer perimeter-based. It is model-based.

II. The Real Threat: LLM Security Risks in 2026

When enterprises ask, “Is generative AI safe for enterprise use?” — the answer depends on architecture.

The Top LLM Security Risks

- Prompt injection attacks

- AI data poisoning

- Model supply chain vulnerabilities

- AI hallucination causing compliance breaches

- Shadow AI across business units

- AI attack surface expansion in multi-cloud environments

Without AI risk management, GenAI adoption becomes unmanaged exposure.

Percentages visual here

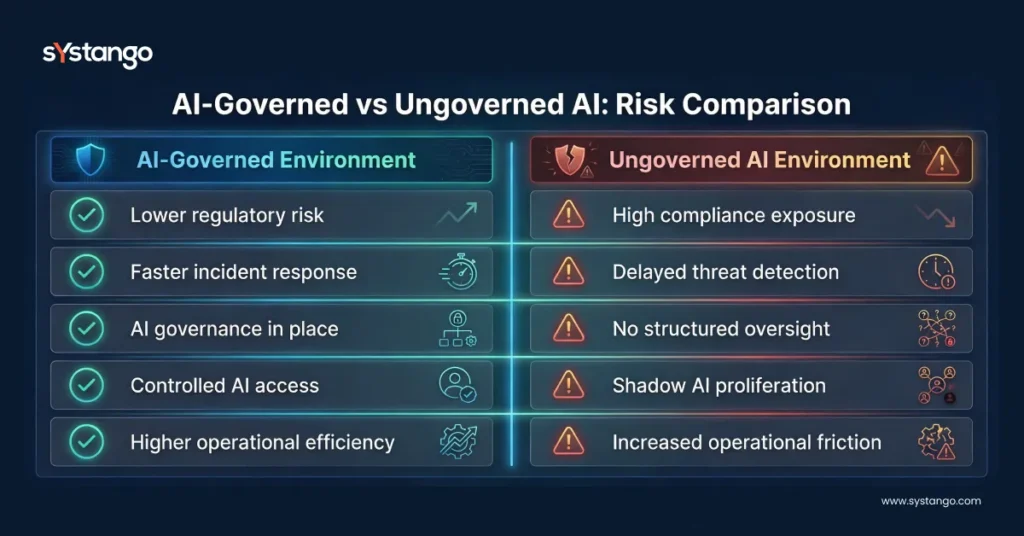

III. The Governance Gap

Many enterprises still lack a formal Enterprise AI security strategy.

As generative AI scales across departments, governance often lags behind deployment. The result is fragmented oversight and unmanaged risk.

- Without structured AI auditing, organisations expose themselves to regulatory fines and compliance breaches.

- Without model validation, hallucination-driven outputs can lead to legal, financial, and reputational damage.

- Without AI access management, insider threat risk escalates.

- Without compliance mapping, exposure under the EU AI Act and GDPR becomes inevitable.

This is the hidden cost of rapid AI adoption.

Governance must precede scale — not follow it.

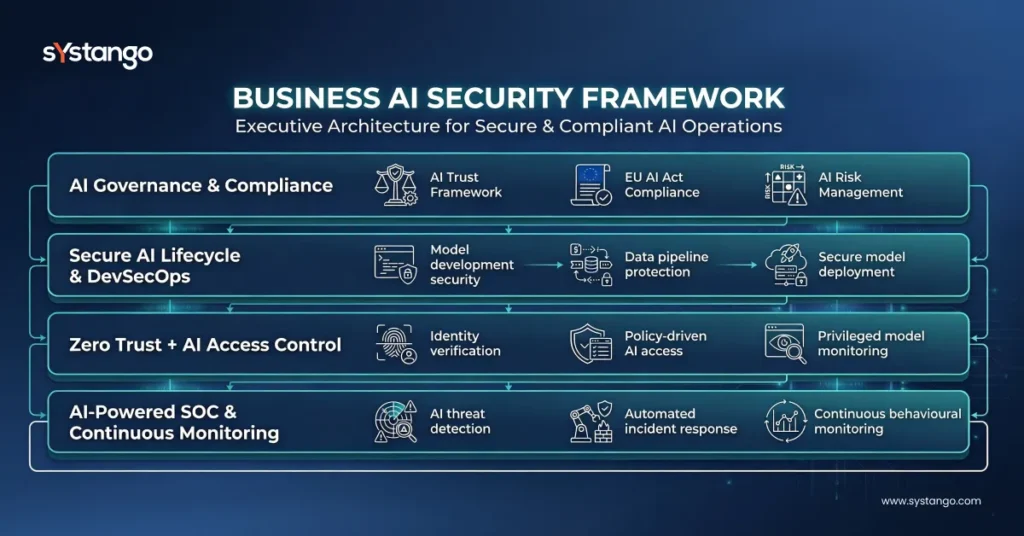

IV. The Solution: AI-Native Cybersecurity Architecture

Retrofitting traditional cloud security models onto AI systems does not work.

Enterprises must implement:

1️⃣ AI Governance & Compliance Layer

A formal AI governance framework 2026 should include:

- AI trust framework

- Secure AI lifecycle controls

- Model validation and explainability

- AI compliance checklist for enterprises

- Alignment with GDPR, EU AI Act, ISO 27001

This establishes accountability at board level.

2️⃣ Secure AI Lifecycle & DevSecOps Integration

Through AI DevSecOps services, enterprises embed security into:

- Model training

- Deployment pipelines

- Monitoring layers

- AI observability and anomaly detection

This is foundational for Secure AI application development.

3️⃣ Zero Trust + AI Integration

Zero Trust architecture with AI ensures:

- Identity-based access

- Behavioural monitoring

- Continuous verification

- Least-privilege AI access management

AI models should never be implicitly trusted — even internally.

4️⃣ AI-Powered Cloud Security Automation

Modern Cloud security with AI automation enables:

- AI-driven threat detection

- Automated SOC workflows

- AI threat modelling

- Continuous AI auditing

This transforms reactive defence into predictive defence.

AI Security framework visual here

V. Proof: Secure AI at Scale

AI Carbon Intelligence Platform

Systango implemented AI-native cloud architecture with embedded governance and lifecycle controls.

Business Outcomes:

- 30% reduction in carbon emissions

- 45% increase in eco-friendly adoption

- 60% improvement in platform engagement

- 50% growth in B2B onboarding efficiency

This demonstrates how AI cloud security consulting services enable both sustainability and scalability.

VI. Business Impact: ROI of AI Security Investment

Investing in AI-powered cloud security solutions delivers:

- Reduced breach probability

- Lower regulatory exposure

- Accelerated AI time-to-market

- Improved operational efficiency

- Increased executive confidence

Risk of inaction:

- EU AI Act penalties

- Reputational damage

- Shadow AI proliferation

- Technical debt accumulation

- AI model compromise

In 2026, insecure AI is an enterprise liability.

Risk Comparison Visual here

VII. Implementation Framework for CIOs & CTOs

To secure generative AI applications:

- Conduct AI risk assessment across cloud environments

- Define formal AI compliance & responsible AI policy

- Extend Zero Trust to AI workloads

- Implement model monitoring & AI auditing

- Establish board-level AI oversight

This forms a complete AI security framework for CIOs.

Conclusion

The winners in 2026 will not be the fastest AI adopters.

They will be the most secure.

Generative AI in cloud security demands structured governance, Zero Trust integration, and AI-native cybersecurity architecture.

Systango partners with enterprises to design and implement secure, compliant, and scalable GenAI ecosystems — combining governance strategy, secure cloud architecture, and lifecycle AI security engineering.

Secure your AI transformation before it becomes your next risk vector.

Executive Summary

In 2026, enterprises scaling generative AI face escalating LLM security risks, regulatory exposure under the EU AI Act, and expanded cloud attack surfaces. While AI improves productivity and threat detection, unsecured AI systems introduce prompt injection, data leakage, compliance breaches, and shadow AI proliferation.

To mitigate risk, organisations must adopt an AI-powered cloud security strategy built on structured AI governance, Zero Trust integration, secure AI lifecycle controls, and AI-native cybersecurity architecture.

This article outlines a practical Enterprise GenAI governance framework, explains how to secure generative AI applications in production, and demonstrates how AI-driven threat detection reduces operational and regulatory risk.

Systango partners with enterprises to design and implement secure, compliant, and scalable GenAI ecosystems — combining governance strategy, AI engineering, and cloud-native security architecture to enable confident AI adoption.